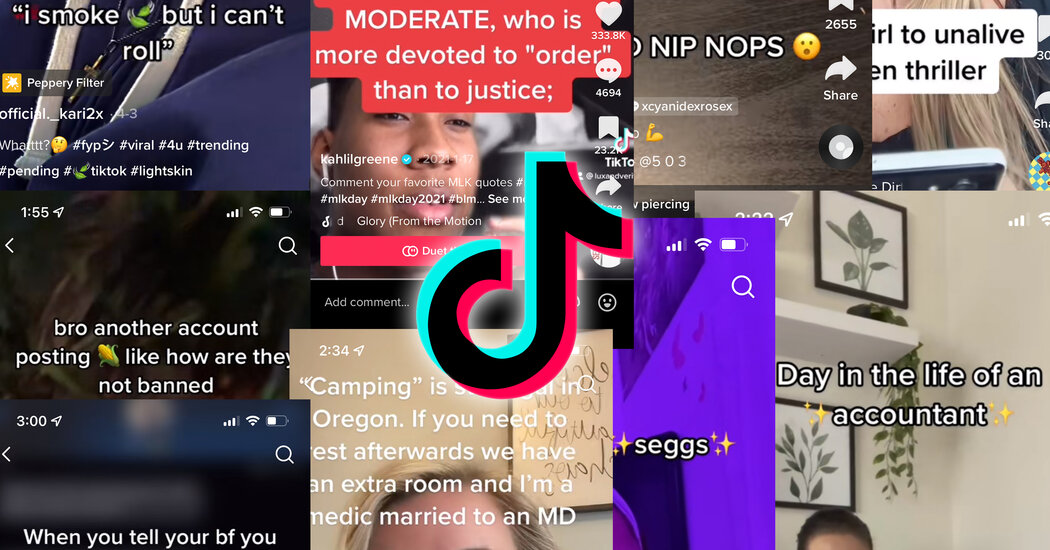

To hear some people on TikTok tell it, we’ve spent years in a “panoramic.” Or perhaps it was a “panini press.” Some are in the “leg booty” community and stand firmly against “cornucopia.”

If it all sounds like a foreign language to you, that’s because it kind of is.

TikTok creators have gotten into the habit of coming up with substitutes for words that they worry might either affect how their videos get promoted on the site or run afoul of moderation rules.

So, back in 2021, someone describing a pandemic hobby might have believed (perhaps erroneously) that TikTok would mistakenly flag it as part of a crackdown on pandemic misinformation. So the user could have said “panoramic” or a similar-sounding word instead. Likewise, a fear that sexual topics would trigger problems prompted some creators to use “leg booty” for L.G.B.T.Q. and “cornucopia” instead of “homophobia.” Sex became “seggs.”

Critics say the need for these evasive neologisms is a sign that TikTok is too aggressive in its moderation. But the platform says a firm hand is needed in a freewheeling online community where plenty of users do try to post harmful videos.

Those who run afoul of the rules may get barred from posting. The video in question may be removed. Or it may simply be hidden from the For You page, which suggests videos to users and is the main way TikToks get wide distribution. Searches and hashtags that violate policies may also be redirected, the app said.

When people on TikTok believe their videos have been suppressed from view because they touched on topics the platform doesn’t like, they call it “shadow banning,” a term that also has had currency on Twitter and other social platforms. It is not an official term used by social platforms, and TikTok has not confirmed that it even exists.

The new vocabulary is sometimes called algospeak.

It’s not unique to TikTok, but it is a way that creators imagine they can get around moderation rules by misspelling, replacing or finding new ways of signifying words that might otherwise be red flags leading to delays in posting. The words could be made up, like “unalive” for “dead” or “kill.” Or they could involve novel spellings — le$bian with a dollar sign, for example, which TikTok’s text-to-speech feature pronounces “le dollar bean.”

In some cases, the users may be just having fun, rather than worrying about having their videos removed.

A TikTok spokeswoman suggested users may be overreacting. She pointed out that there were “many popular videos that feature sex,” sending links that included a stand-up set from Comedy Central’s page and videos for parents about how to talk about sex with children.

Here’s how the moderation system works.

The app’s two-tier content moderation process is a sweeping net that tries to catch all references that are violent, hateful or sexually explicit, or that spread misinformation. Videos are scanned for violations, and users can flag them. Those that are found in violation are either automatically removed or are referred for review by a human moderator. Some 113 million videos were taken down from April to June of this year, 48 million of which were removed by automation, the company said.

While much of the content removed has to do with violence, illegal activities or nudity, many violations of language rules seem to dwell in a gray area.

Kahlil Greene, who is known on TikTok as the Gen Z historian, said he had to alter a quote from the Rev. Dr. Martin Luther King Jr.’s “Letter From Birmingham Jail” in a video about people whitewashing King’s legacy. So he wrote the phrase Ku Klux Klanner as “Ku K1ux K1ann3r,” and spelled “white moderate” as “wh1t3 moderate.”

In part because of frustrations with TikTok, Mr. Greene started posting his videos on Instagram.

“I can’t even quote Martin Luther King Jr. without having to take so many precautions,” he said, adding that it was “very common” for TikTok to flag or take down an educational video about racism or Black history, wasting the research, scripting time and other work he has done.

And the possibility of an outright ban is “a huge anxiety,” he said. “I am a full-time content creator, so I make money from the platform.”

Mr. Greene says he earns money from brand deals, donations and speaking gigs that come from his social media following.

Alessandro Bogliari, the chief executive of the Influencer Marketing Factory, said the moderation systems are clever but can make mistakes, which is why many of the influencers his company hires for marketing campaigns use algospeak.

Months ago, the company, which helps brands engage with younger users on social media, used a trending song in Spanish in a video that included a profane word, and a measurable decrease in views led Mr. Bogliari’s team to believe it had been shadow-banned. “It’s always difficult to prove,” he said, adding that he could not rule out that the overall audience was down or the video was simply not interesting enough.

TikTok’s guidelines do not list prohibited words, but some things are consistent enough that creators know to avoid them, and many share lists of words that have triggered the system. So they refer to nipples as “nip nops” and sex workers as “accountants.” Sexual assault is simply “S.A.” And when Roe v. Wade was overturned, many started referring to getting an abortion as “camping.” TikTok said the topic of abortion was not prohibited on the app, but that the app would remove medical misinformation and community guidelines violations.

Some creators say TikTok is unnecessarily harsh with content about gender, sexuality and race.

In April, the TikTok account for the advocacy group Human Rights Campaign posted a video stating that it had been banned temporarily from posting after using the word “gay” in a comment. The ban was reversed quickly and the comment was reposted. TikTok called this an error by a moderator who did not carefully review the comment after another user reported it.

“We are proud that L.G.B.T.Q.+ community members choose to create and share on TikTok, and our policies seek to protect and empower these voices on our platform,” a TikTok representative said at the time.

Nevertheless, Griffin Maxwell Brooks, a creator and Princeton University student, has noticed videos with profanity or “markers of the L.G.B.T.Q.+ community” being flagged.

When Mx. Brooks writes closed captions for videos, they said they typically substitute profanity with “words that are phonetically similar.” Fruit emojis stand in for words like “gay” or “queer.”

“It’s really frustrating because the censorship seems to vary,” they said, and has seemed to disproportionately affect queer communities and people of color.

Will these words end up sticking around?

Probably not most of them, experts say.

As soon as older people start using online slang popularized by young people on TikTok, the terms “become obsolete,” said Nicole Holliday, an assistant professor of linguistics at Pomona College. “Once the parents have it, you have to move on to something else.”

The degree to which social media can change language is often overstated, she said. Most English speakers don’t use TikTok, and those who do may not pay much attention to the neologisms.

And just like everyone else, young people are adept at “code switching,” meaning they use different language depending on who they’re with and the situations they find themselves in, Professor Holliday added. “I teach 20-year-olds — they’re not coming to class and saying ‘legs and booty.’”

But then again, she said, few could have predicted the endurance of the slang word “cool” as a marker for all things generally good or fashionable. Researchers say it emerged nearly a century ago in the 1930s jazz scene, retreated from time to time over the decades, but kept coming back.

Now, she said, “everybody that’s an English speaker has that word.” And that’s pretty cool.

Source: www.nytimes.com